A recent study tested AI detection tools on student work that had only been lightly edited. The tools were accurate just 72% of the time.

If you run the same content through a paraphrasing tool twice, the detection rate drops to 31%. In other words, seven out of ten pieces go undetected.

It gets even more concerning. Non-native English speakers are flagged 40% more often than native speakers, even when their writing is completely original. I’ve seen college students accused of cheating just because their writing style is formal. One detector labeled a philosophy major’s thesis as “87% AI-generated.” She never used ChatGPT.

Today’s AI detectors examine factors such as word choice and sentence rhythm. GPTZero claims 99% accuracy. Originality.AI describes itself as the “gold standard.” Winston AI says it is ready to be used as court evidence.

Do any of them actually work? Let’s dig into what these tools can and can’t do, which ones perform best in real testing, and when you should (and shouldn’t) trust their results.

How AI Detection Technology Works

Imagine AI detection as trying to spot a forgery. Instead of searching for one clear mistake, you look for lots of small details that seem off.

These tools measure two main things: perplexity and burstiness. Perplexity tracks how predictable your word choices are. ChatGPT picks words based on probability. It goes for safe, common options. Humans? We throw in unexpected vocabulary. We use slang. We coin new phrases. When every word choice feels “logical,” detectors get suspicious.
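To make perplexity concrete, here is a minimal sketch. It is not how any commercial detector works: real tools score text with large neural language models, while this toy version assumes a simple word-frequency (unigram) model with add-one smoothing. The function name `unigram_perplexity` and the sample sentences are made up for illustration.

```python
import math
from collections import Counter


def unigram_perplexity(text: str, reference: str) -> float:
    """Perplexity of `text` under a unigram model fit on `reference`.

    Toy stand-in for a real language model: lower values mean the
    words in `text` were more predictable given the reference text.
    """
    ref_tokens = reference.lower().split()
    tokens = text.lower().split()
    if not tokens:
        raise ValueError("text must contain at least one word")

    counts = Counter(ref_tokens)
    vocab = set(ref_tokens) | set(tokens)
    # Add-one smoothing so unseen words get a small, non-zero probability.
    total = len(ref_tokens) + len(vocab)

    log_prob = sum(math.log((counts[t] + 1) / total) for t in tokens)
    return math.exp(-log_prob / len(tokens))


reference = "the cat sat on the mat and the dog sat on the rug"
predictable = "the cat sat on the rug"
surprising = "the ocelot lounged atop the ottoman"

print(unigram_perplexity(predictable, reference))
print(unigram_perplexity(surprising, reference))
```

The sentence built from common, expected words scores lower than the one full of unusual vocabulary, which is the signal a detector treats as "more machine-like."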

Burstiness looks at the rhythm of sentences. Some people write a short sentence, then a longer one that flows into a much more detailed explanation before circling back to something brief. That variation is burstiness. AI tends to produce sentences of about the same length, which can feel like listening to a metronome rather than real music.
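One rough way to put a number on burstiness is the spread of sentence lengths relative to their average. The sketch below assumes that definition (the coefficient of variation); detectors do not publish their exact formulas, so treat this as one plausible proxy. The names `sentence_lengths` and `burstiness` are illustrative.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Word count of each sentence, splitting naively on ., ! and ?."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Standard deviation of sentence length divided by the mean length.

    0.0 means every sentence has the same length (the "metronome" case);
    larger values mean more variation.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # not enough sentences to measure variation
    return statistics.stdev(lengths) / statistics.mean(lengths)


uniform = "The cat sat down. The dog ran off. The bird flew away."
varied = (
    "Stop. The rain kept falling for hours while we waited "
    "under the awning. Then silence."
)

print(burstiness(uniform))
print(burstiness(varied))
```

The naive sentence splitter will stumble on abbreviations and decimals, so a real analysis would need a proper tokenizer.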

Modern detectors also check your text against huge databases of known AI writing. They look for signs of GPT-4, Claude, or Gemini. But because new model versions keep arriving, detection becomes a never-ending game of catch-up.

Top 5 AI Detection Tools Compared (2026)

I spent two weeks testing these tools on everything from college essays to marketing copy. Here’s what actually happened:

| Tool | Accuracy | False Positive Rate | Best For | Starting Price |
|---|---|---|---|---|
| GPTZero | 99.3% | 0.24% | Educators | Free (10K words/mo) |
| Originality.AI | 94-98% | 14.3% | Publishers | $14.95/month |
| Winston AI | 95-99% | 3-5% | Academic institutions | $12/month |
| Copyleaks | 99%+ | 1-2% | Enterprise/multilingual | Custom pricing |
| Turnitin | 98% | 2-5% | Universities | Institutional only |

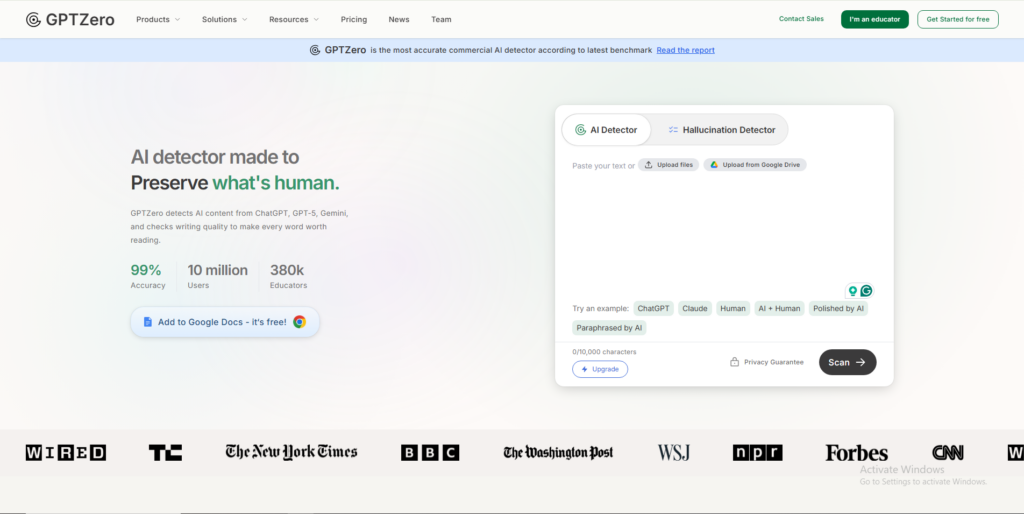

GPTZero – Best for Educators

Independent testing shows GPTZero correctly identifies 99.3% of AI content while falsely flagging human writing only 0.24% of the time. That’s huge when you’re dealing with students’ futures.

I tested it on essays from three different writing styles: formal academic, conversational blog posts, and creative fiction. It handled all three without going haywire. The free tier gives you 10,000 words monthly, which covers most teachers’ needs for spot-checking assignments.

What really sets it apart? Minimal bias against ESL students. Other detectors flag non-native speakers at much higher rates, but GPTZero is visibly working on that problem. Not perfectly – no tool is – but noticeably better than competitors.

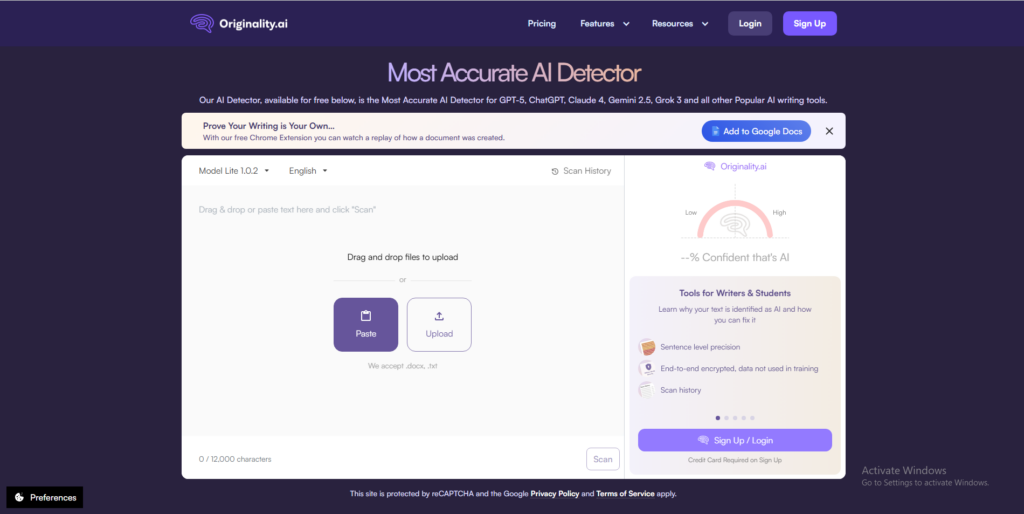

Originality.AI – Best for Content Teams

Publishers love Originality.AI because it’s aggressive. It catches AI content that other tools miss. But that aggressiveness comes at a price: a 14.3% false-positive rate.

That means roughly 1 in 7 human-written articles gets flagged. Ouch.

I watched a content manager test fifty freelance submissions. Originality.AI flagged seven pieces that turned out to be completely legit. But it also caught three sneaky ones that GPTZero missed. So which matters more – catching every AI piece or avoiding false accusations?

Publishers worried about Google penalties would rather investigate false flags than miss AI spam. The tool bundles plagiarism checking and readability scoring, which makes it worth the trade-off if you’re running a large content operation.

Winston AI – Best for Academic Institutions

Winston AI claims 99.98% accuracy. Real-world testing puts it closer to 95-99%, which is still solid but not miraculous.

What universities like is the transparency. Winston shows you exactly which sentences probably came from AI instead of just throwing out a percentage. You can actually discuss specific passages with students rather than making vague accusations.

It struggles more with technical writing, though. I ran three computer science papers through it, and all got flagged at 60%+, even though they were original work. Formal academic prose in STEM fields triggers false positives more often.

Pricing starts at $12 monthly for 80,000 words. That makes it affordable for entire departments, not just individual professors.

Copyleaks – Best Multilingual Support

Copyleaks works across 30+ languages. In testing, it correctly identified all 126 sample documents with zero errors on unmodified AI text. That’s impressive.

The catch? Paraphrasing still breaks it. Accuracy drops to about 50% on heavily edited content, similar to most detectors. But if you’re checking content in Spanish, French, German, or any of the two dozen other languages, this is your best option.

It integrates smoothly with learning management systems and offers robust API options. Organizations with high-volume needs should negotiate custom pricing – the credit system (1 credit = 1 word) gets expensive fast at scale.

Turnitin – Best Institutional Standard

Turnitin dominates higher education because schools already use it for plagiarism detection. When they added AI detection in April 2023, adoption was instant.

Claims? 98% accuracy with under 1% false positives. Reality? Independent testing shows false-positive rates ranging from 2% to 5%. That’s still pretty good, but it’s led to enough student complaints that some institutions have disabled the feature.

You need at least 300 words for analysis, and they only sell institutional licenses. No individual subscriptions available.

The Accuracy Problem

Let’s talk about that 99% accuracy claim. Sounds great, right? Until you do the math.

In a school with 2,000 students, a detector that is wrong about just 1% of honest work means 20 false accusations. In a content team publishing 100 articles monthly, that’s one innocent writer falsely flagged every single month. Are you comfortable with that?
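The arithmetic is easy to check. This sketch assumes every submission is human-written and reads “99% accuracy” as a 1% false-positive rate, which is a simplification; the function name `expected_false_flags` is illustrative. It also runs the same calculation with the false-positive rates from the comparison table.

```python
def expected_false_flags(human_submissions: int, false_positive_rate: float) -> float:
    """Expected number of human-written pieces wrongly flagged as AI.

    Assumes every submission is genuinely human-written and that each
    one is flagged independently with probability `false_positive_rate`.
    """
    if not 0.0 <= false_positive_rate <= 1.0:
        raise ValueError("false_positive_rate must be between 0 and 1")
    return human_submissions * false_positive_rate


# The two scenarios from the text, at a 1% false-positive rate.
print(f"2,000 students: {expected_false_flags(2000, 0.01):.1f} false flags")
print(f"100 articles:   {expected_false_flags(100, 0.01):.1f} false flags")

# The same school, using false-positive rates quoted in the table.
for tool, rate in [("GPTZero", 0.0024), ("Originality.AI", 0.143)]:
    print(f"{tool}: {expected_false_flags(2000, rate):.1f} false flags")
```

Even a small difference in false-positive rate changes the number of wrongly accused people by an order of magnitude at this scale.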

The false-positive problem hits certain groups much harder. Studies show non-native English speakers face accusations at rates 40-60% higher than native speakers. A friend from Korea wrote her entire dissertation by hand – no AI involved – and still got flagged at 73%. Why? Her formal, structured writing matched AI patterns.

If you naturally write in methodical, consistent patterns, detectors see that as suspicious. The same goes for technical writers whose precise language resembles machine output.

And then there’s the cat-and-mouse game. When GPT-4 launched, existing detectors struggled hard. The same thing happened with Claude 3.5 and Gemini 2.5. Detectors eventually catch up, but there’s always a window where accuracy tanks.

Want to know the easiest way to beat any detector? Paraphrasing. According to a 2024 University of Maryland study, detection rates fall below 40% after text has run through decent paraphrasing tools. Someone determined to cheat can hide it. Meanwhile, honest writers get caught in the crossfire.

Making AI-Assisted Content More Human

Got flagged even though you wrote everything yourself? Or maybe you used AI for research and want to make sure the final version passes inspection?

Here’s how.

Add personal experience. AI can’t tell stories about that time you accidentally deleted a client’s entire database. It can’t describe the specific smell of your grandmother’s kitchen. Inject real, lived experience into your writing. Those details are impossible to fake.

Mix up your sentences deliberately. Write one short sentence. Then follow it with something longer that explains the concept in more detail, maybe throwing in a dependent clause or two before you wrap it up. Then go short again. See the rhythm? That’s what humans do naturally.

Kill the AI tells. Every time you write “delve,” “robust,” “comprehensive,” or “landscape” (used metaphorically), delete it. Replace with simpler words. Read your work aloud – if it sounds like a textbook, rewrite it.

Here’s the real test, though: can you discuss your content naturally without looking at it? If someone asks you to explain a key point and you fumble, that’s a sign you didn’t really write it. AI detection aside, that’s the actual problem worth solving.

Final Thoughts

AI detectors serve an important purpose. They help catch spam, verify the authenticity of content, and maintain academic standards. But they’re tools, not judges.

The smartest approach? Use detectors to identify content worth investigating. Look for actual warning signs: generic insights, lack of specific examples, formulaic structure. But never accuse someone of wrongdoing based solely on a percentage.

For writers, understanding detection helps you avoid false accusations. Add personal voice. Vary your style. Include specific details AI can’t generate. If you’re using AI legitimately for research, heavy editing ensures your expertise shines through.

As AI technology evolves, detection methods will continue to adapt. What works today might fail tomorrow. Stay informed about both sides. And remember: the real goal isn’t to eliminate AI entirely – it’s to ensure genuine human expertise, original thinking, and honest work.

FAQs

AI detectors are not fully reliable. Even the best tools hit 95-99% accuracy on pure ChatGPT output. Once someone edits the text or mixes it with human writing, accuracy drops fast. No detector offers 100% certainty.

False positives happen when your style resembles AI patterns. Formal academic tone, consistent sentences, and technical vocabulary all trigger detectors. Non-native speakers face higher false-positive rates because simpler grammatical structures can be mistaken for AI-generated writing.

GPTZero’s free tier (10,000 words monthly) offers the best accuracy at 99.3%. Quillbot has unlimited free scanning, but accuracy drops to around 50%. For occasional checks, stick with GPTZero.

Detection is significantly less accurate for non-English text. Most detectors train primarily on English content. Copyleaks handles 30+ languages well, but false-positive rates increase for less common languages. GPTZero and Winston focus mainly on English.

Detection and AI writing are both improving. It’s an arms race. Detection tech gets better, but AI models also evolve to sound more human. Over time, detection will likely rely more on metadata and behavioral patterns than on analyzing text alone.

For high-stakes decisions, it is worth running the text through more than one detector. Different tools weigh different signals. If GPTZero shows 15% AI, Originality.AI shows 90% AI, and Winston shows 40% AI, those conflicting results tell you to look closer rather than automatically rejecting the work.
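A cross-check like this can be written down as a simple rule. The sketch below is only an illustration: the function name `needs_manual_review` and the 30-point `max_spread` threshold are assumptions, not a recommendation from any detector vendor, and the scores are typed in by hand rather than fetched from any API.

```python
def needs_manual_review(scores: dict[str, float], max_spread: float = 30.0) -> bool:
    """Return True when detectors disagree by more than `max_spread` points.

    `scores` maps a detector name to its "% AI" result (0-100).
    The 30-point default is an arbitrary, hypothetical threshold.
    """
    if len(scores) < 2:
        raise ValueError("need results from at least two detectors")
    values = list(scores.values())
    return max(values) - min(values) > max_spread


# The conflicting results from the example above.
results = {"GPTZero": 15.0, "Originality.AI": 90.0, "Winston AI": 40.0}
print(needs_manual_review(results))
```

A wide spread says the tools are unsure, so a person should read the work rather than trust any single percentage.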